Retail store designer vs store design software vs VR simulation: which does what

A retail leader planning a refit, a category review, or a new format pilot has three categories of resource available. A retail store designer is the human practitioner who brings creative direction and brand expression to physical form. Store design software is the planning environment that translates layouts and assortments into executable plans at scale. VR simulation puts the design into an immersive environment. Stakeholders walk through it, and shoppers behave naturally inside it, before anything is built.

Each category solves a different problem. Choosing more resource than the project needs means overspending on the answer. Choosing too little means discovering the gap during build. Most retail design projects need a combination. The question is which combination, and at which stage each resource comes in.

Storelab has worked across retail design and shopper research for more than 35 years. The pattern that holds across major refits, format launches, and multi-site rollouts is that integrated workflows outperform any single resource used alone. What follows maps what each category does, where each fits, and what gets missed when projects rely on only one.

What a retail store designer does

A retail store designer (a person, studio, or consultancy) brings creative direction, customer experience design, fixture specification, materials selection, lighting design, and brand expression to a project. The designer’s value sits in judgement and taste. Translating a brand strategy into a physical environment is a creative discipline. It cannot be automated.

Store designers earn their fee on hero projects: flagship stores, format launches, brand resets, and fitout for hero locations where the store itself is the brand statement. The designer makes choices about ceiling height, sightlines, material palette, and fixture proportion. No software or simulation can generate those choices from data alone.

The limitations are equally clear. A store designer engagement scales with hours and seniority. Cost climbs quickly on multi-site programmes. Translating a flagship concept across 200 stores in different formats and footprints calls for a different toolset. And a store designer cannot test outcomes before build. The concept is the artefact. Store performance is unknown until the doors open.

What store design software does

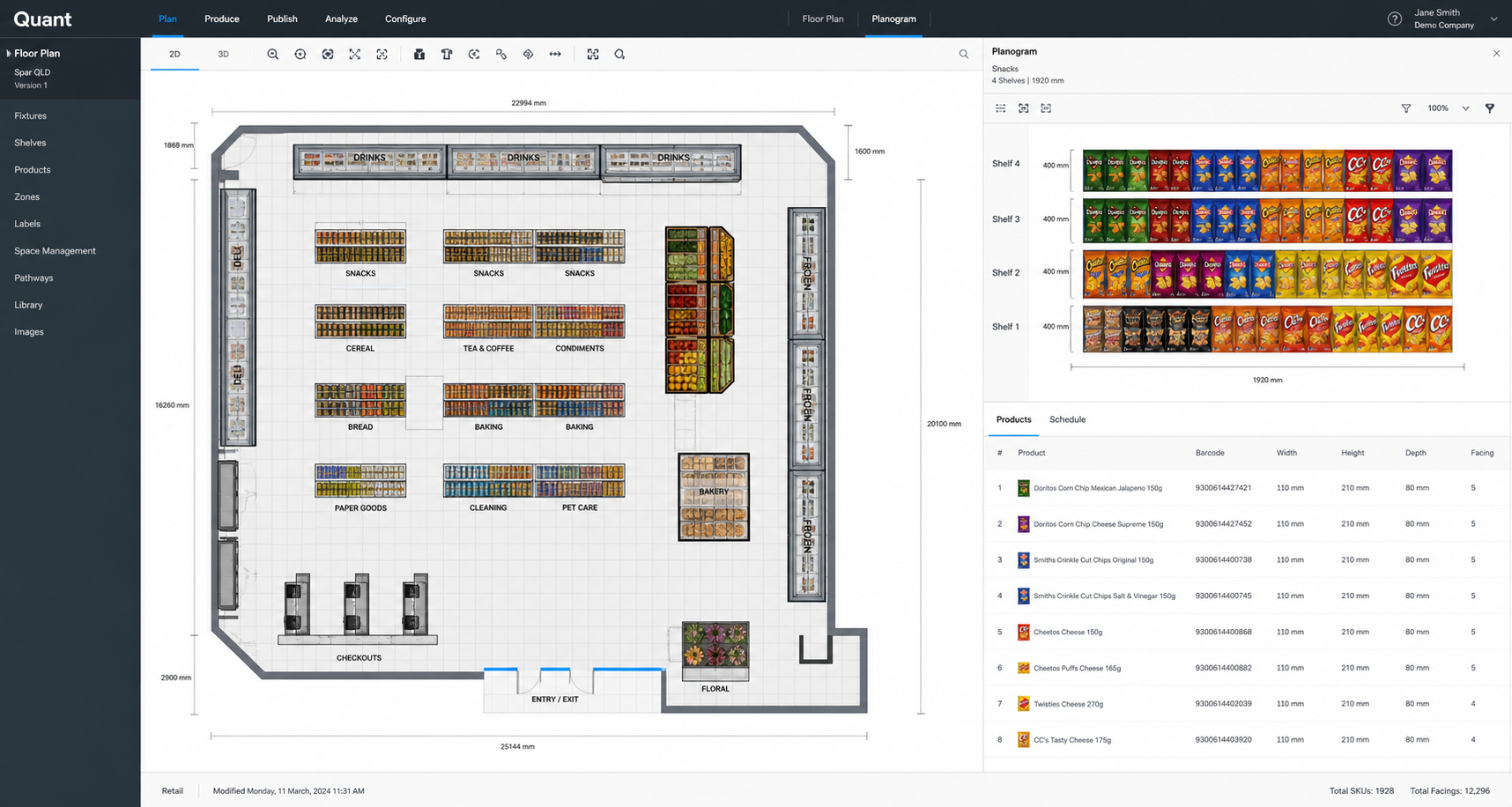

Store design software is the category of 2D and 3D planning tools that handle the operational side of retail store design at scale. The category has three names that procurement teams will recognise. Blue Yonder Space Planning and Floor Planning are the rebranded JDA suite. NielsenIQ Spaceman (sometimes branded NIQ Spaceman) is the other major planogram tool. AutoCAD with retail-specific extensions is widely used for fitout drawings.

The strengths of this category are scale, accuracy, and integration. Every SKU, every fixture, every store cluster can be planned, versioned, and updated against assortment and inventory data. Planogram compliance, range reviews, and store-format rollouts all run through software in any retailer of size. The artefacts are floor plans, planograms, and fixture diagrams. They flow into procurement, build, and merchandising teams.

The limitations are well understood inside the category. A 2D representation does not capture how a shopper experiences a store. Stakeholder approval often stalls when the only artefact is a top-down floor plan or a planogram diagram. Senior decision-makers are evaluating something they cannot mentally walk into. The software measures inputs: space allocation, facings, adjacencies. It does not measure behavioural outcomes.

What VR simulation does

VR simulation places a layout, planogram, or fixture into an immersive virtual environment. Stakeholders navigate it, and shoppers move through it in controlled studies. The output is twofold. Stakeholders see the store rather than a floor plan, which speeds approvals on subjective design decisions. Shoppers in studies generate behavioural data on a layout that has not yet been built. The data covers eye-tracking, dwell, navigation patterns, and pick-up behaviour.

The strengths sit in three places. Stakeholder approval cycles compress because the artefact is closer to the customer experience. Behavioural data capture happens at the design stage, with results available before construction commitment. And on multi-site rollouts, parallel testing of design variants catches mistakes before they reach construction. The worst-case rollout outcome is one mistake repeated 200 times. Validation reduces that risk.

VR simulation has limitations that brands and retailers need to be clear-eyed about. It does not generate creative direction. It tests options the project has already brought to the table. It does not replace planogram production at scale. Producing every planogram for every store in a 1,500-store network is not what the category is for. And VR validation needs source assets to test. The project must already have CAD floor plans, planogram data, or fixture models. Storelab is a testing and validation platform. It complements creative and software workflows. It is honest about what falls outside that scope.

How the three categories compare

The three categories are complementary. The mistake is treating them as alternatives. The table compares the three across seven dimensions that matter when scoping a retail design project.

| Dimension | Retail store designer | Store design software | VR simulation |
|---|---|---|---|
| Creative direction | Core capability | Not in scope | Not in scope |
| Spatial planning at scale | Limited; scales with hours | Core capability | Limited; validation only |
| Pre-build validation | Concept review only | 2D layout review | Core capability |
| Multi-site rollout consistency | Difficult to scale | Core capability | Validation across variants |
| Behavioural data capture | Inferred from experience | None | Core capability |
| Cost order of magnitude | Project fees: six to seven figures | Annual licensing: five to six figures | Per-study: five to six figures |
| Time to insight | Weeks to months | Days for compliance, weeks for review | Days to weeks for behavioural data |

The cost figures are order-of-magnitude framings. Engagement scope, market, and seniority of designer drive significant variation. The point of the column is the relative scale, not a budgeting figure.

Which resource fits which project

The four common project types below each map to a combination of resources. The pattern is that no project type is well served by a single category.

Flagship store or new format launch

A flagship store or a new format pilot is the project type where a retail store designer earns their fee. The creative direction shapes the brand expression in physical form. VR validation belongs at the concept-approval stage. Stakeholders walk through the store before commitment to build. The design team gets behavioural data on the proposed layout. Storelab’s Connect product handles layout visualisation in immersive form for this stage. Store design software comes in once the format is approved, for fixture and planogram production.

Multi-site rollout

A 200-store rollout is the project type where store design software does the heavy lifting. Every fixture, every planogram, every store-format variant runs through the planning environment. VR validation belongs at the format approval stage. Catching one mistake at validation and removing it from 200 sites is the highest-value VR application in retail. The store designer’s role on a rollout is typically format-level, not per-site.

Category or range review

A category review is a software-led project. Planogram production, assortment changes, and adjacency decisions all live in the planning environment. VR fits at the stakeholder approval stage. The executive team is evaluating a recommendation that is hard to assess from a planogram diagram alone. Storelab’s Storyteller product generates video walkthroughs from the VR environment for stakeholder presentations. That compresses the approval cycle on category submissions.

Packaging or shelf-impact test

A packaging or shelf-impact test is a VR-led project. Behavioural data capture is the central artefact: eye-tracking, fixation, pick-up rate. Store design software produces the fixture context in which the test runs. A retail store designer is rarely involved at this scale. The project tests pack performance inside an already-defined retail environment.

What gets missed when one resource is used alone

Each category has a known failure mode when used in isolation.

A store designer working without VR validation produces concepts that may or may not perform once the store opens. Customer flow, sightlines, fixture proportion, and adjacency choices look correct in a render. Some of them turn out to be wrong once shoppers are in the store. Fixing a flagship after opening costs several multiples of catching the issue at the design stage.

A software-only workflow produces planograms and floor plans that look correct on screen but feel wrong in store. Shoppers navigate by sightlines, brand blocks, and category cues. None of those appear in a top-down view. Executive approval cycles stall on planogram diagrams because the artefact does not represent the experience the customer will have.

VR alone, without creative direction, tests options that should not have been on the table in the first place. Behavioural data on a poorly conceived layout is still poor layout data. VR is a validation environment. It needs strong inputs to produce useful outputs.

Where this is heading

Leading retailers are moving from sequential workflows to parallel ones. In the old pattern, the designer handed off to software, and software handed off to build. The new pattern runs validation throughout. The shift is driven by capital efficiency on multi-site programmes and by the cost of getting subjective design decisions wrong at scale. Each resource still does what it has always done. What has changed is when in the project lifecycle each is brought in, and how their outputs feed back into the others.

For retail teams running a refit, a format launch, or a category review in the next twelve months, the practical step is a workflow audit. Map which decisions the project will make. Map which artefact each decision will be made from. Map which category of resource is producing that artefact. The audit usually reveals where validation has been skipped, or where one category is being asked to do work that belongs to another. Storelab can run a benchmark assessment on how a planned project would map across the three categories. The aim is to identify the decisions that need stronger inputs before commitment, and to match each decision to the right resource.
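For teams that keep the audit in a spreadsheet or script, the three mapping steps can be sketched as a small data structure. This is an illustrative sketch only, not a Storelab tool. The `Decision` fields, the `audit` function, and the example decisions are hypothetical. The `CORE` lookup copies the "Core capability" cells from the comparison table, on the assumption that a decision should be fed by the category whose core capability it depends on.

```python
from dataclasses import dataclass

# Core capabilities per category, copied from the comparison table.
CORE = {
    "retail store designer": {"creative direction"},
    "store design software": {
        "spatial planning at scale",
        "multi-site rollout consistency",
    },
    "VR simulation": {"pre-build validation", "behavioural data capture"},
}


@dataclass
class Decision:
    name: str        # decision the project will make
    artefact: str    # artefact the decision will be made from
    resource: str    # category producing that artefact
    needs: str       # capability the decision depends on (hypothetical field)
    validated: bool  # tested before commitment to build?


def audit(decisions):
    """Return (mismatched, unvalidated) lists of decision names."""
    # A decision is mismatched when its resource lacks the needed core capability.
    mismatched = [
        d.name for d in decisions if d.needs not in CORE.get(d.resource, set())
    ]
    # A decision is unvalidated when nothing has tested it before build.
    unvalidated = [d.name for d in decisions if not d.validated]
    return mismatched, unvalidated


# Hypothetical example project, for illustration only.
decisions = [
    Decision("Entrance sightline", "concept render",
             "retail store designer", "creative direction", False),
    Decision("Aisle adjacency", "top-down floor plan",
             "store design software", "behavioural data capture", False),
    Decision("Fixture rollout", "planogram set",
             "store design software", "multi-site rollout consistency", True),
]

mismatched, unvalidated = audit(decisions)
print("Asked of the wrong category:", mismatched)
print("Validation skipped:", unvalidated)
```

In this made-up project, the aisle adjacency decision is flagged twice: it depends on behavioural data but is being made from a floor plan, and nothing has tested it before build. A real audit would use the project's own decision list and a finer-grained view of capability than the single `needs` field assumed here.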